What 100,000 Simulated Tournaments Tell You About March Madness

Kalshi just launched a $1 Billion Perfect Bracket Challenge. A billion for perfection and a million for the highest-scoring bracket if nobody gets it perfect. Submissions close Wednesday.

Let’s get this out of the way right off the bat. Nobody is winning the billion.

The odds of a perfect bracket are roughly 1 in 9.2 quintillion if you treat every game as a coin flip (that’s roughly a nine followed by 18 zeroes in case you were wondering).

The million-dollar secondary prize for the highest-scoring bracket? Now that’s where having better-calibrated probabilities than the rest of the field actually matters.

After brackets opened, I built a quantitative system to see what the data says about how to fill one out. It includes:

A Monte Carlo simulator that plays the tournament 100,000 times using 40 years of historical seed-matchup data.

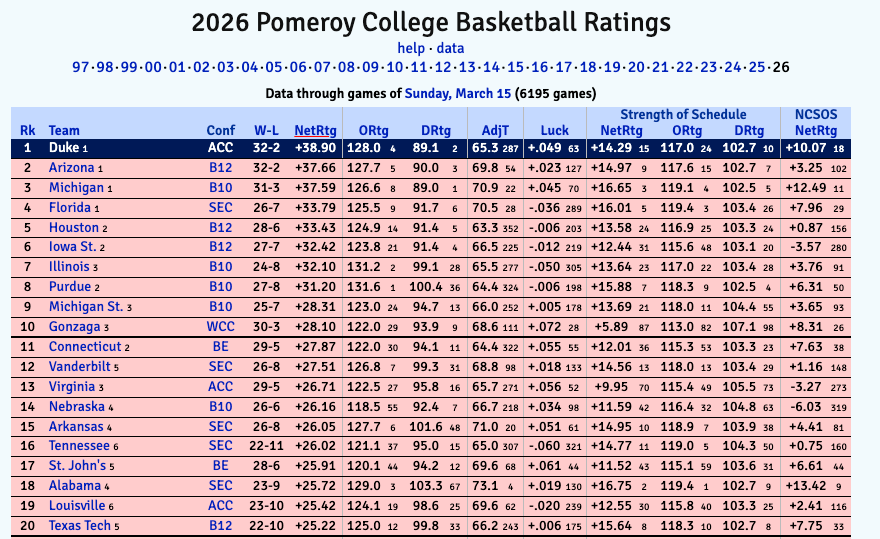

A KenPom Log5 model bracket that uses tempo-adjusted efficiency ratings for every game-by-game matchup.

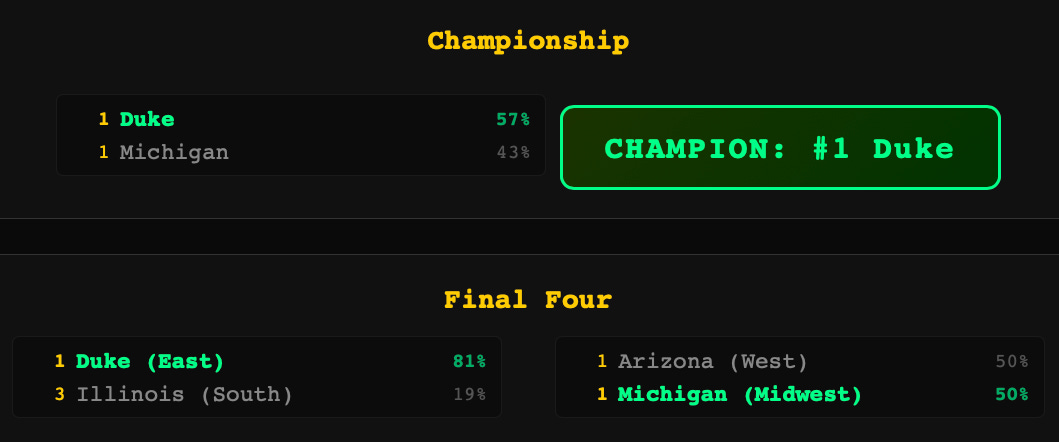

Two bracket modes that produce two different champions, plus a hybrid approach built on top of them.
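For readers who haven’t met Log5 before, here is a minimal sketch of the standard formula. It turns two teams’ win probabilities against an average opponent into a head-to-head probability. The inputs below (0.90 and 0.80) are hypothetical, and how the efficiency ratings get converted into those inputs is not described in this article, so treat this as an illustration of the formula rather than the model itself.

```python
def log5(p_a, p_b):
    """Log5 estimate of the probability that team A beats team B.

    p_a and p_b are each team's win probability against an average
    opponent (hypothetical inputs in this sketch).
    """
    return (p_a - p_a * p_b) / (p_a + p_b - 2 * p_a * p_b)

# Hypothetical example: a 0.90 team against a 0.80 team.
p = log5(0.90, 0.80)
print(round(p, 3))  # 0.692
```

Two evenly matched teams come out at 50%, and the two sides of any matchup always sum to 100%.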

Here’s what we found.

Two Brackets, Two Champions

I ran the KenPom Log5 model in two modes and they tell very different stories. The gap between them is the whole argument for why a single bracket prediction is insufficient.

Chalk mode picks the higher-probability team in every single game. No exceptions.

The result: Duke wins the championship over Arizona (56% to 44%), with just 5 upsets across the entire bracket.

Final Four: Duke (East), Florida (South), Arizona (West), Michigan (Midwest). This is the “if the better team always wins” scenario.

Weighted mode introduces realistic variance. Instead of always picking the favorite, it selects winners probabilistically. A team with a 70% win probability wins 70% of the time, not 100%.
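The difference between the two modes comes down to a single line of logic. The sketch below is an assumption about how such a picker could look, not the actual code behind the brackets; `pick_winner` and its parameters are hypothetical names.

```python
import random

def pick_winner(favorite, underdog, p_favorite, mode, rng=random):
    """Return the team that advances from one game.

    chalk:    always take the team with the higher win probability.
    weighted: the favorite advances with probability p_favorite.
    """
    if mode == "chalk":
        return favorite if p_favorite >= 0.5 else underdog
    if mode == "weighted":
        return favorite if rng.random() < p_favorite else underdog
    raise ValueError(f"unknown mode: {mode}")

rng = random.Random(0)  # fixed seed so the run is repeatable
runs = 10_000
chalk_share = sum(
    pick_winner("favorite", "underdog", 0.70, "chalk") == "favorite"
    for _ in range(runs)
) / runs
weighted_share = sum(
    pick_winner("favorite", "underdog", 0.70, "weighted", rng) == "favorite"
    for _ in range(runs)
) / runs
print(chalk_share)     # 1.0: chalk never takes the underdog
print(weighted_share)  # lands near 0.70
```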

The result: Arizona wins the championship, Houston crashes the Final Four as a 2-seed by knocking off Florida in the Elite 8, and 11 upsets occur across the bracket.

Same model. Same data. Different champions. How?

The championship matchup is identical in both modes: Duke 56%, Arizona 44%. In chalk, 56% always beats 44%. In weighted, that 44% hits sometimes. And in this particular weighted run, it hit. Arizona won the coin flip.

But the paths also change. In weighted mode, Houston knocks off Florida in the Elite 8, which never happens in chalk because Florida is the favorite. That gives Arizona a semifinal against Houston, where it has a 70% win probability, instead of a tougher matchup against Florida.

Variance in one game ripples through the entire bracket.

This is why a single bracket is a bad prediction tool.

Duke is the favorite in any individual game it plays. But “most likely” at 56% means Arizona still wins 44 out of every 100 times they play. The only way to capture that uncertainty is to run the tournament thousands of times and look at the full distribution of outcomes.

What 100,000 Simulations Reveal

A single bracket is one guess. One path through 9.2 quintillion possible outcomes. It’s like flipping a coin 63 times, writing down the results, and calling it a prediction.
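The 9.2 quintillion figure is just the number of ways 63 two-outcome games can go. A quick check, assuming nothing beyond a 64-team single-elimination field:

```python
games = 63                      # a 64-team single-elimination bracket has 63 games
possible_brackets = 2 ** games  # two possible winners per game
print(f"{possible_brackets:,}")  # 9,223,372,036,854,775,808
```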

We did something different. We played the entire tournament 100,000 times on a computer. Every game. Every matchup. Every possible upset. Each time, the outcomes were weighted by the actual probability of each team winning. Then we counted what happened.
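Here is a toy version of that loop, shrunk to eight teams so it fits on a page. Everything in it is a stand-in: the team names, the `RATINGS` values, and the ratio-of-ratings `win_prob` function are hypothetical, whereas the real simulator draws on historical seed-matchup data and runs 100,000 times. The shape of the idea is the same: play every game with a weighted random draw, record the champion, repeat, then count.

```python
import random
from collections import Counter

# Hypothetical strength ratings for a toy 8-team field (illustrative only).
RATINGS = {"A": 9.0, "B": 8.5, "C": 8.0, "D": 7.0,
           "E": 5.0, "F": 4.0, "G": 3.0, "H": 2.0}
# Bracket order: adjacent pairs meet in the first round.
BRACKET = ["A", "H", "D", "E", "C", "F", "B", "G"]

def win_prob(a, b):
    """Stand-in probability that a beats b (ratio of ratings)."""
    return RATINGS[a] / (RATINGS[a] + RATINGS[b])

def play_tournament(teams, rng):
    """Play one single-elimination tournament and return the champion."""
    while len(teams) > 1:
        teams = [
            a if rng.random() < win_prob(a, b) else b
            for a, b in zip(teams[0::2], teams[1::2])
        ]
    return teams[0]

def simulate(n_sims, seed=0):
    """Count how often each team wins the title across n_sims tournaments."""
    rng = random.Random(seed)
    return Counter(play_tournament(BRACKET, rng) for _ in range(n_sims))

N_SIMS = 20_000
counts = simulate(N_SIMS)
for team, wins in counts.most_common():
    print(f"{team}: {wins / N_SIMS:.1%}")
```

The output is a title share for every team rather than a single predicted winner, which is exactly the kind of distribution the next few paragraphs read from.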

Duke won about 12,000 of those 100,000 tournaments. So did Michigan. So did Arizona. So did Florida. That means each of them has roughly a 12% chance of cutting down the nets. Not 50%. Not 30%. Twelve percent.

If anyone tells you they know who’s winning this tournament, they’re guessing.

The math says it’s a four-way toss-up at the top, and even those four teams combined win it less than half the time.

Here’s what else 100,000 simulations show you that a single bracket never could:

A #1 seed wins it all 48.1% of the time. Less than half. The most likely outcome is that a team seeded 2nd or lower wins the championship.

The average tournament produces 8.3 first-round upsets. Most people put 2 or 3 upsets in their bracket. The math says you’re not being bold enough.

The Cinderella Metric: there’s an 82.8% probability that at least one 12-seed beats a 5-seed. A 44.8% chance that two or more pull it off. The question isn’t whether Cinderella shows up. It’s which one.
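Those two numbers are what you would expect from simple binomial arithmetic. The sketch below assumes the four 5-vs-12 games are independent and that a 12-seed wins any one of them about 35.6% of the time. That rate is my back-solved assumption chosen to match the 82.8% figure, not a number pulled from the simulator.

```python
from math import comb

def prob_at_least(k, n, p):
    """P(at least k successes in n independent trials with success rate p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Assumed per-game 12-seed win rate (back-solved, not from the simulator).
P_TWELVE_SEED_WIN = 0.356
N_GAMES = 4  # one 5-vs-12 game in each region

at_least_one = prob_at_least(1, N_GAMES, P_TWELVE_SEED_WIN)
at_least_two = prob_at_least(2, N_GAMES, P_TWELVE_SEED_WIN)
print(f"at least one 12-seed wins: {at_least_one:.1%}")
print(f"two or more 12-seeds win:  {at_least_two:.1%}")
```

Under that single assumption, both figures come out at about 82.8% and 44.8%. Four individually unlikely upsets add up to a very likely "at least one."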

That’s the power of running it 100,000 times. You stop asking “who do I think wins?” and start asking “what does the full range of outcomes actually look like?” The answers are usually less certain, more interesting, and more useful than any single prediction.

How to Actually Fill Out a Bracket

(A Framework, Not a Pick Sheet!)

So which model do you use? Chalk or weighted?

Neither. At least not directly.

Chalk maximizes correct picks. But bracket contests aren’t solitaire. You’re competing against millions of entries, and most of them are chalk-heavy. If Duke wins and you picked Duke, so did 80% of the field. You didn’t gain ground on anyone.

Weighted mode is closer to the right instinct, but random variance isn’t strategic. You don’t want to pick upsets randomly. You want to pick them where the model sees something the crowd doesn’t.

The optimal strategy is chalk as your base, with selective contrarian picks in high-conviction spots. Take the favorite where the model and the crowd agree (all four 5-vs-12 matchups this year, for example). Then go contrarian where the model says a lower seed is undervalued: Santa Clara over Kentucky, VCU over North Carolina, Houston over Florida in the Elite 8. Those are the picks where being right vaults you past the field.

Think of it like portfolio construction. You don’t want a portfolio that mirrors the index. That’s chalk. You don’t want random deviation. That’s weighted. You want targeted active bets where your information edge is largest. Everything else stays at the benchmark.
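One simple way to make “targeted active bets” concrete is to compare the model’s probability for a pick against the share of the field making the same pick, and only deviate from chalk where the gap is large. This is a hedged sketch of that heuristic, not the hybrid method used for the final bracket. The `Candidate` class, the `MIN_EDGE` threshold, and every number below are placeholders invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    label: str
    model_prob: float   # model's probability that the pick wins
    crowd_share: float  # share of entries making the same pick

    @property
    def edge(self):
        """How much more the model likes this pick than the crowd does."""
        return self.model_prob - self.crowd_share

# Placeholder numbers for illustration only; not taken from the model.
candidates = [
    Candidate("favorite everyone picks", 0.80, 0.85),
    Candidate("underdog the crowd ignores", 0.48, 0.20),
    Candidate("trendy upset", 0.35, 0.45),
]

MIN_EDGE = 0.10  # hypothetical threshold for taking an active bet
contrarian = sorted(
    (c for c in candidates if c.edge >= MIN_EDGE),
    key=lambda c: c.edge,
    reverse=True,
)
for c in contrarian:
    print(f"{c.label}: edge {c.edge:+.2f}")
```

With these placeholder inputs only the ignored underdog clears the bar. The popular favorite and the trendy upset both stay at the benchmark because the crowd already owns them.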

Where the Model Goes Contrarian

The weighted bracket produced 11 upsets. Not all of them are worth taking in a bracket contest. Here are the ones where the model’s conviction is highest and the crowd ownership is lowest:

Santa Clara over Kentucky (10 vs 7): Santa Clara’s efficiency profile is closer to a 6-seed than a 10-seed. Kentucky is a name-brand 7-seed that most casual brackets will advance without thinking. High differentiation value.

VCU over North Carolina (11 vs 6): North Carolina is another name that casual brackets auto-advance. VCU’s defensive metrics create matchup problems. Low crowd ownership on the upset side.

Utah State over Villanova (9 vs 8): Kalshi’s own bracket shows this at 56/45%, essentially a coin flip. The model agrees it’s close but leans Utah State. Most brackets will default to Villanova on name recognition.

Houston over Florida (E8, 2 vs 1): This is the biggest swing pick in the bracket. Houston’s defensive efficiency is elite, and their path through the South gives them favorable matchups leading up to the Elite 8. If Houston beats Florida, most brackets are destroyed. If you have it, you’re way ahead.

Virginia over Iowa State (S16, 3 vs 2): Iowa State is a popular pick to make a deep run. Virginia’s efficiency data says they’re closer to a 2-seed than their 3-seed label suggests. Another spot where most of the field is on the wrong side.

Notice what’s not on this list: any of the 5-vs-12 upsets. The model is emphatic that all four of those matchups favor the 5-seed by 15-21 percentage points. The “always pick a 12-seed” advice that circulates every March? This year, it’s a trap.

How We Built This

A disclosure that matters: we built the entire system with Claude Code over a weekend. No prior Python experience. No quant background. The stack includes a 40-year historical base rate lookup table, a Monte Carlo simulator, a KenPom Log5 bracket generator with chalk and weighted modes, and a signal engine that pulls live data.

We mention this because it’s relevant. The tools to do this kind of analysis didn’t exist for non-engineers 18 months ago. Domain expertise plus AI implementation is a new kind of competitive advantage. The domain knowledge came from years in financial services. The engineering happened in a weekend.

If you’re a financial advisor, an analyst, or anyone who thinks quantitatively about problems, the barrier between having a question and having a system that answers it has never been lower. March Madness was a fun application. The methodology applies everywhere.

The Bottom Line

The chalk bracket says Duke.

The weighted bracket says Arizona.

The Monte Carlo simulations say it’s genuinely a four-team race at 12% each, with meaningful probability on 2-seeds and 3-seeds that most people aren’t considering.

My agent used a hybrid approach and arrived at this Final Four + Championship pick.

The lesson? If you’re filling out a bracket at your office or on a platform like Kalshi, don’t go pure chalk and don’t go random.

Start with the favorites.

Then go contrarian in the spots where the efficiency data says the crowd is wrong.

That’s where you separate from the field. That’s where the million-dollar bracket lives.

Process over predictions. Frameworks over gut feelings. Data over vibes.

Can’t wait to keep y’all updated! See you on the court 🏀

Matthew Snider is the founder of Block3 Strategy Group, author of “Warren Buffett in a Web3 World,” and publisher of the BitFinance newsletter. He holds a Series 65 and MBA, and has been an active participant in digital asset markets since 2015. This article is for educational purposes only and should not be considered financial advice. Always consult with a qualified professional before making investment decisions.