I Spent an Afternoon Talking About AI With a Local Nonprofit. Here’s What I Learned.

About a month ago, a faith-based nonprofit here in Durham asked me to come spend an afternoon with their operations team and talk about AI. Earlier this week, ten of us gathered in the parish library (with a few more joining online), and the brief was simple.

Help us understand the landscape.

Show us where the pitfalls are.

Let’s brainstorm what this could mean for the work we actually do.

I’ll tell you up front that this is the kind of work I find the most rewarding, and I want to share what came out of it. Not because the conversation was unique, but because it surprised me how universal the questions were.

If your organization is anywhere near this conversation right now, the patterns we ran into are probably your patterns too.

The Frame: Nobody Wants to Be a Technologist

Before we touched a single tool, I wanted to make the why clear. Nobody in that room signed up to become a technologist.

The organist isn’t trying to outsource the music.

The parish administrator isn’t looking to be replaced by a chatbot.

The pastor isn’t here to learn prompt engineering as a second vocation.

What we’re trying to do is much smaller, and much more useful.

We’re trying to give people back time. Time is the most valuable resource any organization has, especially a small one whose mission depends on being present in the community. If a tool can take an hour of administrative friction off someone’s plate every week, that’s an hour they can spend with the people in the community they’re actually here to serve.

That framing changed the energy in the room. Once it was clear we weren’t talking about replacement, we could talk honestly about everything else.

Touring the Tools

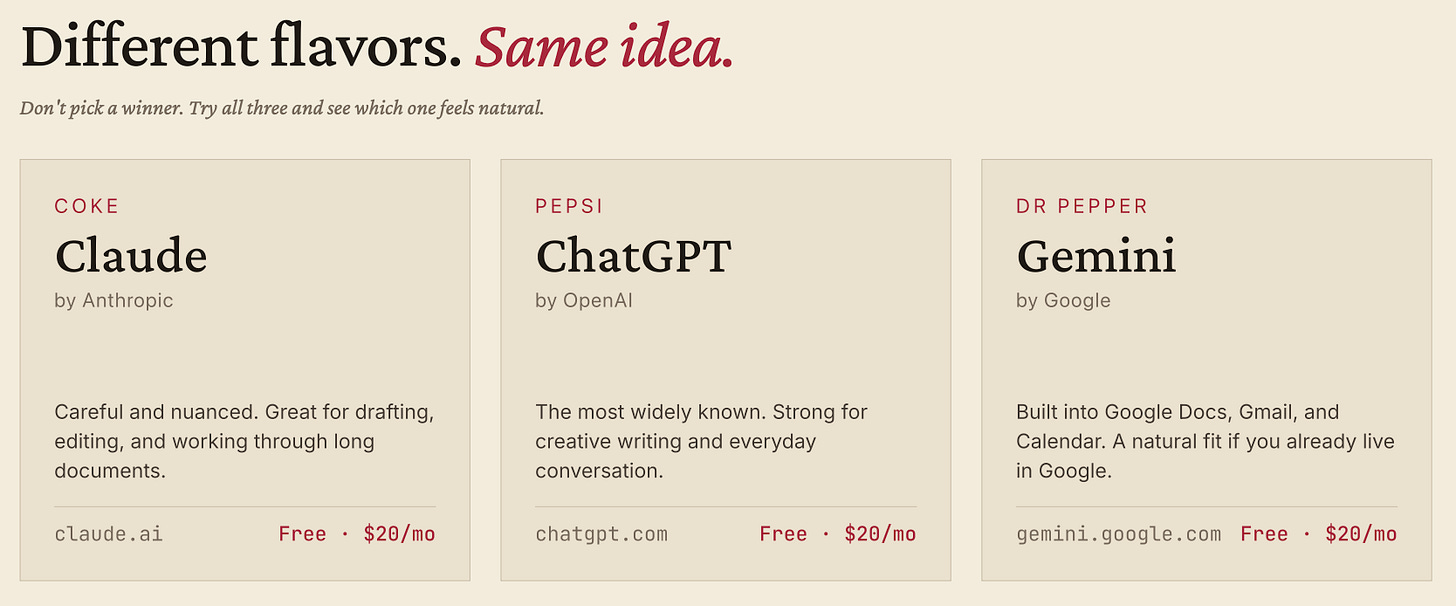

We walked through the major players: ChatGPT, Claude, Gemini, and Grok. I tried to be even-handed, but you can’t have this conversation without acknowledging that ethics come up almost immediately. There were real concerns about OpenAI’s governance, about Grok’s content posture, and about Gemini in a corporate context where the data ends up entangled with a search business.

I want to be clear that nobody in the room treated Claude as above that fray either. The concerns about training data, energy use, and concentration of power apply to every major AI company, Anthropic included.

What I noticed wasn’t a verdict that Claude is the “ethical choice,” but a tendency for people who care about these trade-offs to land on whatever option fits best with the rest of their values. Most people in the room had started on ChatGPT because that’s what showed up first, and many had migrated to Claude over the past year, partly for the features and partly because the company’s posture on safety and governance felt more aligned with how they think about their other choices.

Then we opened the floor up for discussion.

People shared what they’d already tried, what had worked in their personal lives, what had failed in a professional context. This was the part of the workshop I learned the most from.

Someone had used AI to draft a difficult email and then second-guessed whether that was the right move.

The organist was using it to find and curate new music associated with a given week’s theme.

Another person was using it to summarize long PDF reports they didn’t have time to read end to end.

Hearing each other share normalized the experimentation. The beginners realized they weren’t behind, and the heavier users realized their workflows weren’t as obvious as they’d assumed. There’s a lesson in there about why these conversations work better in a room than in a Slack channel.

Where AI Could Actually Help

I came in with six pre-formed ideas about where this technology could meet their actual operations. We worked through each one together.

The first was weekly bulletins and communications. Service materials, recurring announcements, the kind of templated work that eats hours every week when it shouldn’t. Drag and drop a few files, get a clean draft back, edit, ship it. The time adds up faster than people realize.

The second was meeting notes and institutional memory. Governance bodies forget. Action items get lost. Three years from now, nobody remembers why a particular policy decision was made. AI-assisted note-taking creates a searchable log that doesn’t depend on any one person being in the room, so far fewer action items slip through the cracks.

The third is the one I wasn’t sure we’d end up discussing, and it turned out to be one of the most thoughtful parts of the conversation: liturgical planning and worship prep. Lectionary research, prayer drafts, hymn ideas. The key word is draft. Nobody in that room wanted AI writing the rector’s sermons or replacing the creative and prayerful work that goes into worship. What they wanted was a faster on-ramp to the research, so the human work could happen sooner and better.

The fourth, and the one I think is the biggest opportunity for nonprofits anywhere, is grant proposals and funding applications. Every grant is different. The qualification criteria are buried. The actual writing takes hours. AI can compress all of it. It can scan opportunities, flag fits, draft narratives and budget justifications in funder-tailored language, and free a small staff to actually pursue more funding instead of being gated by the writing bottleneck. If there are companies building tools specifically for this market, they’re not building them loudly enough. The need is enormous, and a previous nonprofit I worked with would have benefited from exactly this.

The fifth was event planning and volunteer coordination. Timelines, volunteer request emails, checklists, run-of-show documents. The work that’s usually scattered across five tabs, three inboxes, and somebody’s head becomes structured in a single place. For an organization that lives and dies by events, the time saved compounds with every event.

The sixth was data logging and record keeping. Pastoral visits, pledges, attendance, facility needs. Most of this information already exists somewhere in the organization, but it’s fragmented across spreadsheets, paper files, and individual memory. Bringing it together into a single accessible place means the next question someone asks can actually be answered, rather than estimated.

The Ethics Conversation

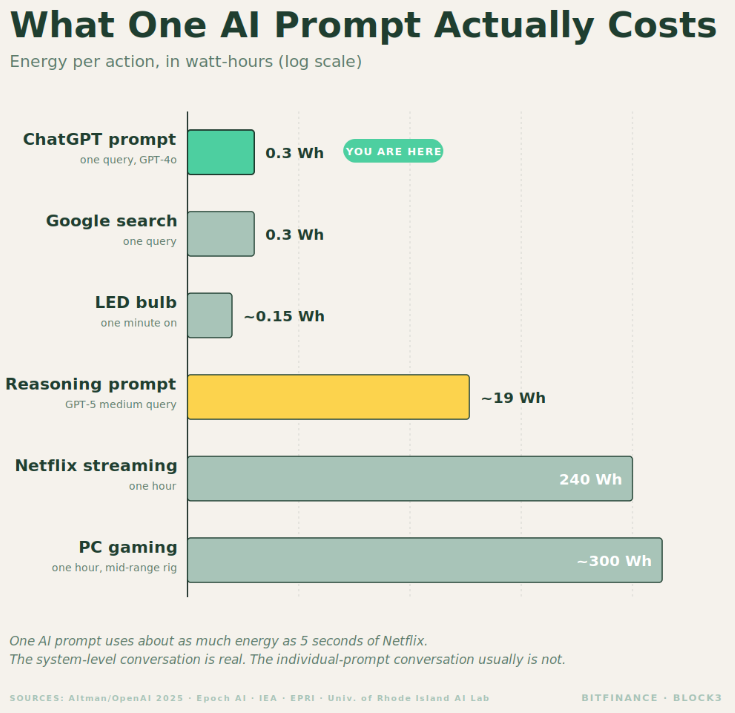

We saved time at the end for the ethical concerns, because they deserved a real airing. Energy use, water use, the larger questions about what AI is doing to the labor market and to creative industries. None of that goes away by ignoring it.

My honest view, which I shared with the room, is that the marginal individual use of AI is more like the marginal use of a car than people realize. One person driving doesn’t cause climate change. The system of cars, manufacturers, and regulations does. The same logic applies here. The pollution conversation is a system-level conversation, and the lever a small nonprofit has is much bigger when applied to its mission than to its individual prompt count.

I also pointed out that the largest water user in North Carolina is something most people never think about: thermoelectric power generation. Power plants quietly withdraw billions of gallons every day for cooling, and almost nobody talks about it because it’s been there forever. That’s less a defense of AI’s footprint than a reminder that the framing we use to evaluate impact matters, and that focusing on the visible newcomer while ignoring the entrenched incumbent is rarely how good policy gets made.

There are still ways to use these tools more thoughtfully. Don’t generate AI image and video slop. Those are by far the most computationally expensive operations, and the marginal value is usually low. Combine prompts when you can. Re-use outputs instead of regenerating them. The same discipline that makes the work better tends to make the energy footprint smaller.

Two Kinds of People

The closing reflection I’ll leave you with is the one I gave the group. There are really only two kinds of people in this conversation. The curious and the helpful. The curious are willing to sit with a tool they don’t fully understand, ask one more question, try one more workflow. The helpful are the ones who, once they understand it, turn around and bring everyone else along.

You need both.

A community of curious people without helpful people just generates anxiety.

A community of helpful people without curious people stops learning.

The goal of an afternoon like this is to make sure every room has some of both.

This is the kind of work I love doing, and it’s a meaningful piece of what Block3 was built to do. If your team, nonprofit or otherwise, is on the brink of this conversation and wants someone to walk through it with you, I’d be glad to talk. Reach out.

Matthew Snider is the founder of Block3 Strategy Group, author of “Warren Buffett in a Web3 World,” and publisher of the BitFinance newsletter. He holds a Series 65 license and an MBA, and has been an active participant in digital asset markets since 2015. This article is for educational purposes only and should not be considered financial advice. Always consult with a qualified professional before making investment decisions.