5 Lessons Backtesting Taught Me That Every Investor Should Demand From Their Fund Manager

Originally, I set out to have an AI agent make trades for me autonomously. Little did I realize that putting real money to work would first require building a due diligence framework.

But after more than three months of building, testing, and killing strategies with an AI coding partner, I started noticing something.

The mistakes I was catching in my own work were the same mistakes I’d seen in pitch decks, fund presentations, and quantitative strategy marketing materials for years.

The difference was that now I had the tools to see through them.

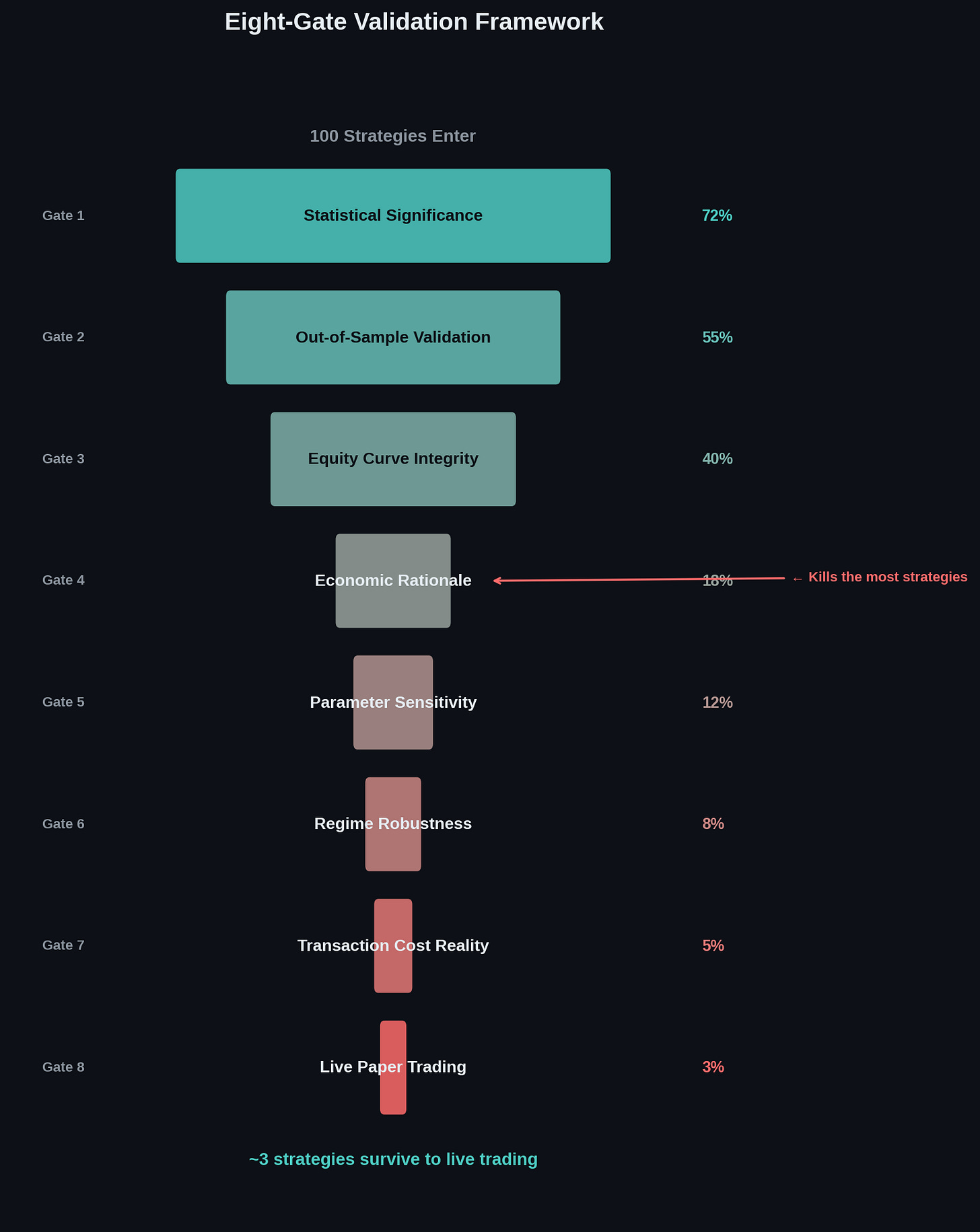

Last week, for example, I ran a viral trading bot advertising a 79% win rate through my 8-Gate Validation Framework. It failed at Gate 1. The equity curve looked incredible, but the underlying model was predicting no better than a coin flip.

As I continue to build and test, I'm seeing this more and more from influencers claiming to have the latest AI-agent money-maker.

That teardown crystallized something I've been thinking about for weeks. The red flags that kill quantitative strategies are the same red flags that should concern any investor placing capital with a fund manager, a strategy provider, or an advisor touting systematic returns. Most people just don't know what questions to ask.

Here are 5 things backtesting taught me that I think every investor deserves to know.

(If you haven't already, share this with a friend or subscribe yourself!)

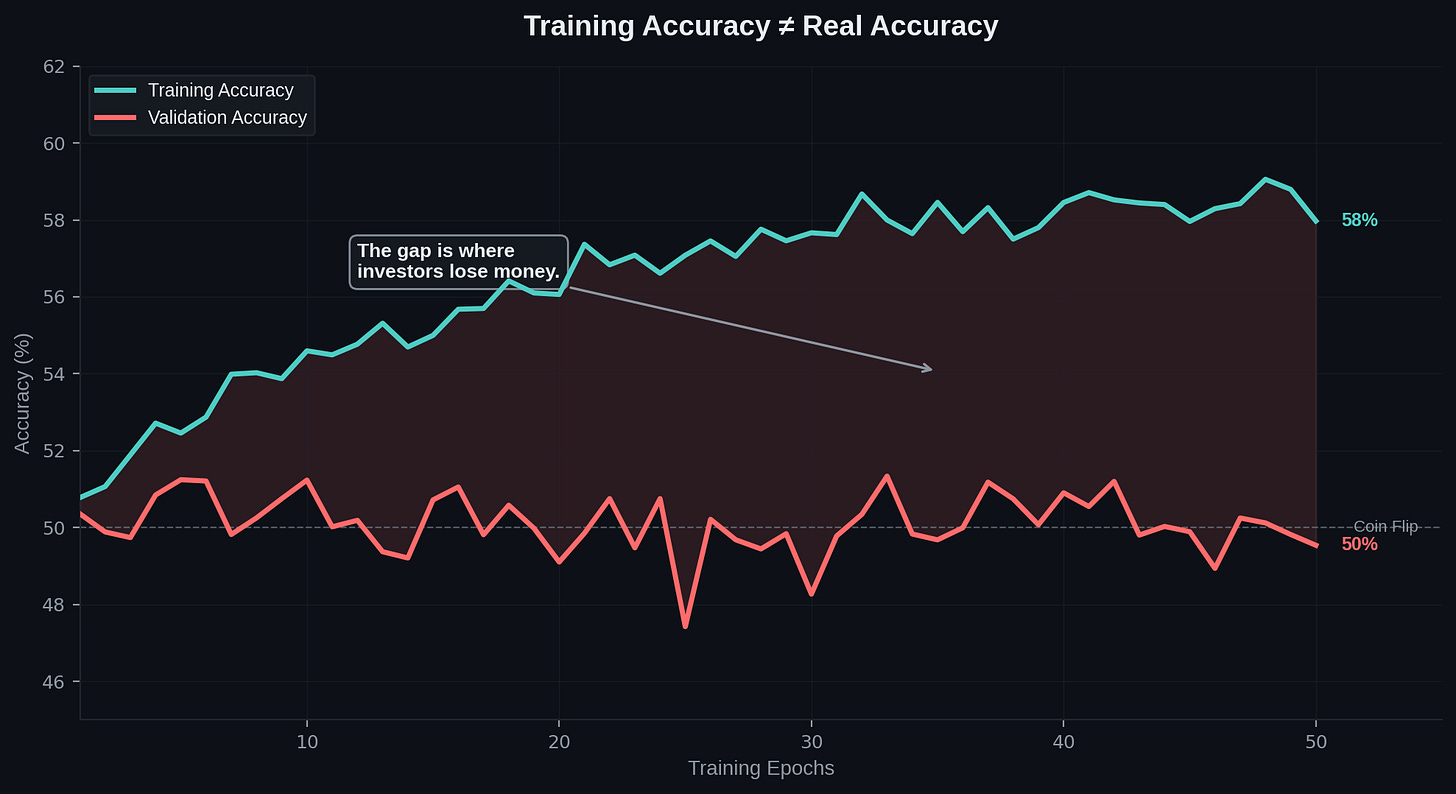

1. Training Accuracy Is Not Real Accuracy

This is the most important lesson in quantitative finance, and it’s the one most people never learn.

When someone shows you a strategy that performed at 79% accuracy or returned 40% annually, the first question is not "how much?" It's "on what data?"

Training accuracy measures how well a model explains the past. Out-of-sample accuracy measures how well it predicts the future. These are fundamentally different things, and the gap between them is where most investors lose money.

The viral bot we tore down last week had training accuracy climbing steadily to 59%. Its validation accuracy never meaningfully departed from 50%. That’s a coin flip. The model was memorizing historical noise and learning nothing generalizable.

In backtesting, the number that matters is always the one measured on data the model has never seen. If someone can’t produce out-of-sample results, that tells you everything you need to know.
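To make the gap between training and out-of-sample accuracy concrete, here is a minimal Python sketch on purely random, hypothetical up/down data. The "model" memorizes which move followed each 8-day pattern in the first 1,000 days, then is scored on the next 2,000 days it never saw. The lookback, series length, and function names are illustrative assumptions, not the viral bot's model or ours.

```python
import random

LOOKBACK = 8  # hypothetical: how many past days the "model" looks at


def make_noise_series(n, seed=7):
    """Hypothetical data: daily up (+1) / down (-1) moves with no real signal."""
    rng = random.Random(seed)
    return [rng.choice([1, -1]) for _ in range(n)]


def fit_lookup_model(moves, lookback=LOOKBACK):
    """'Train' by memorizing the majority next move after each recent pattern."""
    seen = {}
    for t in range(lookback, len(moves)):
        pattern = tuple(moves[t - lookback:t])
        seen.setdefault(pattern, []).append(moves[t])
    # Majority vote per pattern; ties default to "up".
    return {p: (1 if sum(nxt) >= 0 else -1) for p, nxt in seen.items()}


def accuracy(model, moves, lookback=LOOKBACK):
    """Share of days where the predicted direction matched the actual move."""
    hits = 0
    total = 0
    for t in range(lookback, len(moves)):
        pattern = tuple(moves[t - lookback:t])
        prediction = model.get(pattern, 1)  # unseen pattern -> guess "up"
        hits += prediction == moves[t]
        total += 1
    return hits / total


moves = make_noise_series(3000)
train, test = moves[:1000], moves[1000:]  # chronological split, no shuffling

model = fit_lookup_model(train)
train_acc = accuracy(model, train)
test_acc = accuracy(model, test)
print(f"in-sample accuracy:     {train_acc:.1%}")
print(f"out-of-sample accuracy: {test_acc:.1%}")
```

Because this data contains no signal, any in-sample accuracy above 50% is pure memorization, and the held-out score should land near a coin flip. The split is by time on purpose: on real market data, shuffling days before splitting can leak future information into training.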

Question to ask: Can you show me the out-of-sample performance, not just the backtest?

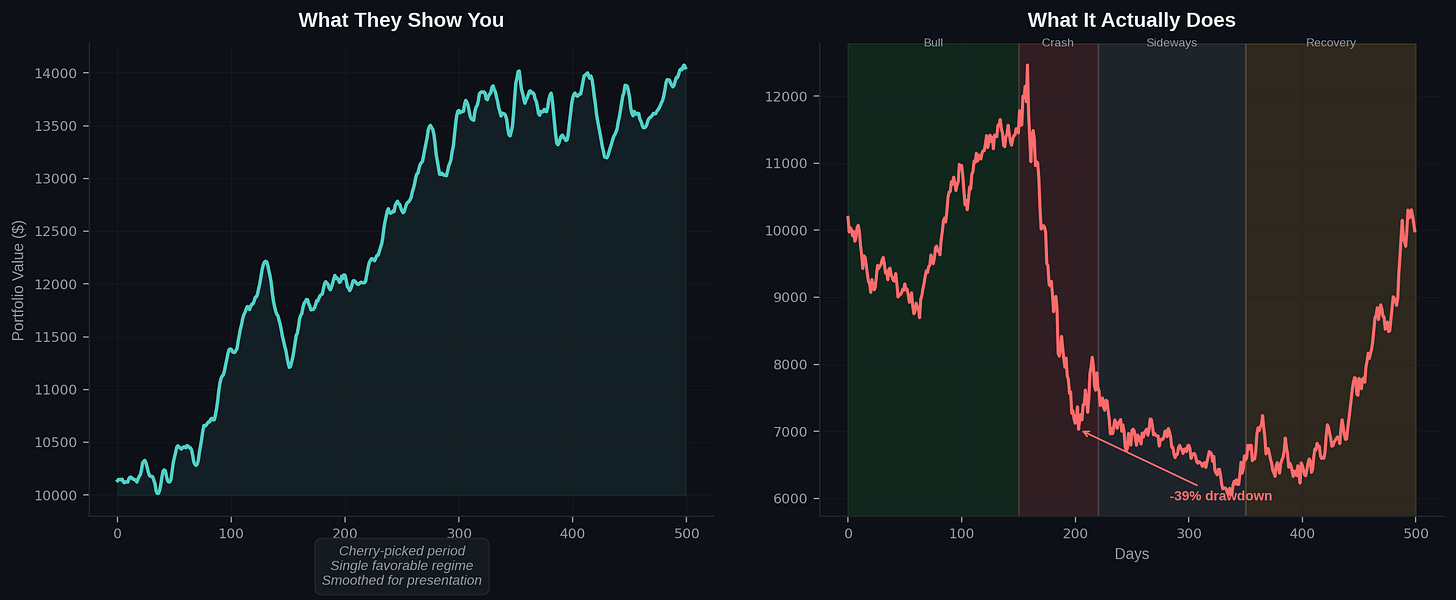

2. A Beautiful Equity Curve Can Lie

Equity curves are the single most persuasive visual in fund marketing. Investors find it alluring when a manager can show a line running from the bottom-left to the top-right of a chart.

It looks like proof.

It feels like proof.

It often isn’t.

There are at least 3 ways a curve can look incredible and mean nothing.

Look-ahead bias. Technical indicators calculated using data that includes the prediction target. The model gets to peek at the answer sheet.

Single-path bias. The backtest tests exactly one historical sequence. A strategy that looks brilliant from 2021 to 2023 might fall apart if you shuffled the order of market events.

Selection bias. Cherry-picking the most confident signals in a broadly rising market will outperform random selection. That’s not alpha. That’s a leveraged long with extra steps.
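Look-ahead bias in particular can be shown in a few lines. This hedged sketch uses hypothetical random returns with no pattern at all and compares a "leaky" signal, which sets each day's position using that same day's return (the effect of an indicator computed on a close that hasn't happened yet at decision time), against a causal signal that only uses the prior day. The names and data are illustrative assumptions.

```python
import random


def random_returns(n, seed=11):
    """Hypothetical daily returns: pure noise with no exploitable pattern."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 0.01) for _ in range(n)]


def hit_rate(positions, returns):
    """Share of days where the position (+1 long, -1 short) matched the return's sign."""
    hits = sum(1 for p, r in zip(positions, returns) if p * r > 0)
    return hits / len(returns)


returns = random_returns(2000)

# Leaky: day t's position uses day t's own return, which is only known after the
# close. This is look-ahead bias: the signal peeks at the answer sheet.
leaky = [1 if r > 0 else -1 for r in returns]

# Causal: day t's position uses only day t-1's return, so it starts on day 1.
causal = [1 if returns[t - 1] > 0 else -1 for t in range(1, len(returns))]

leaky_rate = hit_rate(leaky, returns)
causal_rate = hit_rate(causal, returns[1:])
print(f"leaky hit rate:  {leaky_rate:.1%}")
print(f"causal hit rate: {causal_rate:.1%}")
```

The leaky version scores essentially 100% on data with no edge whatsoever, while the causal version hovers near 50%. The fix is to lag every signal so that each day's position uses only information available before that day.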

The fix is straightforward in principle: test across multiple market regimes. Bull markets, bear markets, sideways grinds. If a strategy only works in one environment, it doesn’t have an edge. It has a coincidence.
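One way to run the multi-regime check is to compound a strategy's returns separately inside each regime and compare them with buy-and-hold over the same days. The sketch below assumes the regimes are already labeled (in practice they would be date ranges you choose); the drifts, volatility, and the 1.5x-leverage "strategy" are all hypothetical. It also illustrates the selection-bias point above: a leveraged long looks like alpha in a bull regime and gives it back in a bear regime.

```python
import random
from collections import defaultdict


def compound(returns):
    """Total return from compounding a list of simple daily returns."""
    growth = 1.0
    for r in returns:
        growth *= 1.0 + r
    return growth - 1.0


def per_regime_report(regimes, strategy_returns, benchmark_returns):
    """Compound strategy and benchmark returns separately inside each regime."""
    buckets = defaultdict(lambda: ([], []))
    for label, s, b in zip(regimes, strategy_returns, benchmark_returns):
        buckets[label][0].append(s)
        buckets[label][1].append(b)
    return {label: (compound(s), compound(b)) for label, (s, b) in buckets.items()}


# Hypothetical market: 250 days each of bull, bear, and sideways conditions.
rng = random.Random(3)
drifts = {"bull": 0.0015, "bear": -0.0015, "sideways": 0.0}
regimes, market = [], []
for label, drift in drifts.items():
    for _ in range(250):
        regimes.append(label)
        market.append(rng.gauss(drift, 0.005))

# Hypothetical "strategy" that is really just 1.5x leverage on the market.
strategy = [1.5 * r for r in market]

report = per_regime_report(regimes, strategy, market)
wins = [label for label, (s, b) in report.items() if s > b]
for label, (s, b) in report.items():
    print(f"{label:>8}: strategy {s:+.1%} vs buy-and-hold {b:+.1%}")
print(f"beats buy-and-hold in {len(wins)} of {len(report)} regimes: {wins}")
```

A strategy that only beats the benchmark in the regime where the market itself was rising hasn't shown an edge; it has shown exposure.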

Question to ask: Was this strategy tested across multiple market regimes, or just one favorable period?

3. If They Can’t Explain the Edge, There Is No Edge

This is the most underrated test in quantitative finance. And, frankly, it's the simplest.

Every model or strategy needs to beat some benchmark or have a repeatable, provable way to generate "alpha" that other managers can't.

The way we get alpha is by finding a market inefficiency for the strategy to exploit.

Not: what does the model predict.

Not: what indicators does it use.

What structural or behavioral force in the market creates the opportunity this strategy captures? If the answer is “the model found patterns in the data,” that’s not an edge. That’s pattern-matching on historical noise and hoping it repeats.

We call this a “borrowed edge.” (aka a house of cards!)

Borrowed edges disappear the moment conditions change because there was never a durable mechanism supporting them. A real edge has an economic rationale. Momentum works because of behavioral anchoring and institutional herding. Mean reversion works because of liquidity-driven overreaction. Value investing works because markets systematically misprice long-duration assets.

If someone managing your money can’t articulate why their strategy works in plain language, the strategy probably doesn’t work. It just hasn’t failed yet.

Question to ask: What specific market inefficiency does your strategy exploit, and why do you expect it to persist?

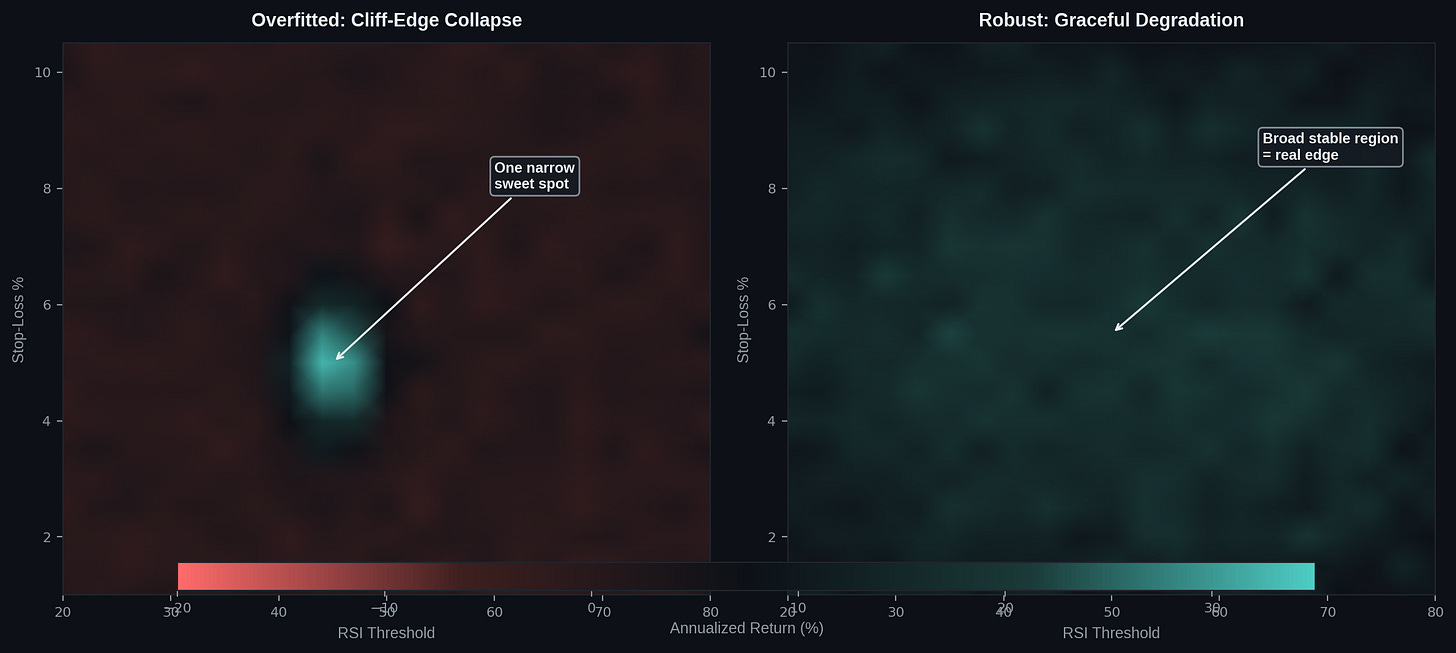

4. Parameter Sensitivity Tells You More Than Returns

Here’s a test that takes about thirty seconds and will save you from bad strategies more reliably than any due diligence questionnaire.

Change the key parameters by 20% in both directions. If the strategy collapses, you’re looking at an overfitted model.

Early in my own build, I found parameter combinations that produced gorgeous backtesting results. Smooth equity curves, strong returns, low drawdowns. I was ready to deploy. Then I moved the sliders. A 20% change in the RSI threshold or the trailing stop percentage and the entire strategy fell apart. It wasn’t robust. It was decorative.

A strategy with real edge shows graceful degradation when you adjust parameters. Performance declines gradually, not catastrophically. If there’s one narrow configuration that works and everything around it fails, the model has memorized a specific set of historical conditions. It hasn’t learned anything transferable.
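The 20% sensitivity test can be automated: re-run the backtest with each parameter nudged down and up by 20%, one at a time, and check how far the result falls. In this sketch, `robust_backtest` and `fragile_backtest` are hypothetical stand-ins for a real backtest (simple formulas, not simulations) using an RSI threshold and a trailing stop, and the "keep at least half the base return" pass rule is an assumed threshold, not an industry standard.

```python
import math
from typing import Callable, Dict, Tuple

Params = Dict[str, float]


def sensitivity_report(backtest: Callable[[Params], float], base: Params, shift: float = 0.20):
    """Re-run a backtest with each parameter moved down and up by `shift`, one at a time."""
    base_score = backtest(base)
    results: Dict[Tuple[str, float], float] = {}
    for name, value in base.items():
        for move in (-shift, shift):
            tweaked = dict(base)
            tweaked[name] = value * (1 + move)
            results[(name, move)] = backtest(tweaked)
    return base_score, results


def degrades_gracefully(base_score: float, results: Dict[Tuple[str, float], float],
                        max_drop: float = 0.5) -> bool:
    """True if every perturbed run keeps at least (1 - max_drop) of the base score."""
    if base_score <= 0:
        return False
    return all(score >= (1 - max_drop) * base_score for score in results.values())


# Hypothetical stand-ins for a full backtest: annual return (%) as a function of
# an RSI entry threshold and a trailing-stop percentage.
def robust_backtest(p: Params) -> float:
    return 12 - 0.005 * (p["rsi_threshold"] - 30) ** 2 - 50 * (p["trailing_stop"] - 0.08) ** 2


def fragile_backtest(p: Params) -> float:
    spike = math.exp(-((p["rsi_threshold"] - 30) / 1.5) ** 2
                     - ((p["trailing_stop"] - 0.08) / 0.004) ** 2)
    return 25 * spike - 3  # great at exactly one setting, a loss everywhere nearby


base = {"rsi_threshold": 30.0, "trailing_stop": 0.08}
for name, bt in [("robust", robust_backtest), ("fragile", fragile_backtest)]:
    score, results = sensitivity_report(bt, base)
    print(f"{name}: base {score:.1f}%, worst nearby {min(results.values()):.1f}%, "
          f"graceful = {degrades_gracefully(score, results)}")
```

Moving one parameter at a time is the simplest version. A fuller check would also perturb parameters jointly, since overfitted strategies often depend on one specific combination.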

Question to ask: What happens to your returns if you change the key parameters by 20%?

5. The Purpose of a Backtest Is to Kill Bad Ideas, Not to Sell Good Ones

This might be the single most important sentence in this article.

The purpose of a backtest is to discard bad models, not to improve them.

Anyone using backtesting primarily as marketing material has the incentive structure backwards.

A backtest is a stress test. It exists to find the weaknesses in a strategy before real capital does. If the first thing someone does with a backtest is put it in a pitch deck, they’re using the tool wrong.

We’ve killed our own strategies for the same reasons we flagged in the viral bot teardown. Kitchen-sink features. Overfitting. Single-path testing. Missing economic rationale. The framework exists because these mistakes are easy to make and hard to spot without a systematic process.

The best fund managers I’ve worked with don’t show you their winners first. They show you their process for identifying and discarding losers. That’s the real signal.

Question to ask: How many strategies have you killed, and what was the process that killed them?

How I’m Building This Differently

Every red flag in this article maps to a specific design choice in the Buffett Bot. That’s not a coincidence. The framework came from the build, and the build is shaped by the framework. It includes:

On out-of-sample testing: Our backtesting engine draws on nine institutional-grade data feeds across multiple asset classes. Every strategy is validated on data it has never seen. We invested $1,000 in institutional data specifically because free, retail-grade feeds aren't reliable enough for the kind of cross-validation that catches overfitting.

On regime testing: We test across the 2020 crash, the 2021 bull run, and the grinding sideways action of recent months. If a strategy only works when the wind is blowing in one direction, we kill it.

On economic rationale: Every strategy in the Buffett Bot has to pass our 8-Gate Validation Framework. Gate 1 is statistical significance. But the gate that kills the most strategies is economic rationale: what structural inefficiency does this exploit, and why should it persist? If we can't answer that in plain language, the strategy doesn't ship.
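The article doesn't specify how Gate 1 measures statistical significance. One common approach for a hit rate is a one-sided exact binomial test against a 50% coin flip, sketched below in Python with only the standard library. The hit counts and the 5% cutoff are hypothetical examples.

```python
from math import comb


def coin_flip_p_value(hits: int, trials: int) -> float:
    """One-sided exact binomial test against a 50% hit rate.

    Returns P(X >= hits) for X ~ Binomial(trials, 0.5): the chance a pure
    coin flip would score at least this well by luck alone.
    """
    if not 0 <= hits <= trials:
        raise ValueError("hits must be between 0 and trials")
    tail = sum(comb(trials, k) for k in range(hits, trials + 1))
    return tail / 2**trials  # exact integer ratio, so no float overflow for large trials


# Hypothetical out-of-sample results for two candidate strategies.
for hits, trials in [(515, 1000), (560, 1000)]:
    p = coin_flip_p_value(hits, trials)
    verdict = "passes" if p < 0.05 else "fails"
    print(f"{hits}/{trials} = {hits / trials:.1%} hit rate, p = {p:.4f} -> {verdict} a 5% bar")
```

A small p-value is necessary but not sufficient: testing many strategies and keeping the best one inflates false positives, which is why gates like economic rationale still matter.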

On parameter sensitivity: Our interactive dashboard has adjustable sliders for every parameter. Change a number and the entire simulation reruns. We've killed strategies that looked great at one configuration and collapsed at another. That's the system working as designed.

On killing bad ideas: We publish both wins and failures. The viral bot teardown, where we took apart someone else’s 79% win rate strategy, used the same framework we apply to our own work. We’ve killed our own strategies for the same reasons. The process doesn’t play favorites.

In the next few weeks, we’re expanding the backtesting infrastructure to include live paper trading validation through Alpaca’s API. That means strategies don’t just survive the backtest. They survive real-time market conditions with simulated capital before a single dollar of live money touches them.

The whole build is public. Every iteration, every failure, every course correction. That’s not marketing. It’s a forcing function for the same rigor this article is asking you to demand from everyone else.

The tools to evaluate quantitative strategies aren’t locked behind a Bloomberg terminal. They’re questions. Simple, specific, testable questions. The five in this article will cover 90% of what you need.

If you’re an investor placing capital in someone else’s strategy, these questions are your first line of defense. If you’re a builder creating your own, they’re the standard you should hold yourself to.

Either way, the backtest isn’t the answer.

It’s just the beginning of the conversation.

Have a great week ahead! - Matthew

Matthew Snider is the founder of Block3 Strategy Group, author of “Warren Buffett in a Web3 World,” and publisher of the BitFinance newsletter. He holds a Series 65 and MBA, and has been an active participant in digital asset markets since 2015. This article is for educational purposes only and should not be considered financial advice. Always consult with a qualified professional before making investment decisions.